"Fixing" massive parallel builds on Azure Pipelines with TFVC

Last weekend I helped migrate a client from Team Foundation Server 2017 to Azure DevOps in the cloud. One of the many reasons to migrate was the ability to leverage the hosted pool to do builds and not having to maintain build servers.

We did 3 dry-run migrations, tested many build pipelines and found no issues. We then commenced with the production migration and were hit by some strange error messages in one of our pipelines that relies on massive parallelization.

In the meantime a number of changes are rolling out to address the issues raised in this post:

- ✅https://github.com/microsoft/azure-pipelines-agent/pull/3589

- ✅https://github.com/microsoft/azure-pipelines-agent/pull/3586

- ✅https://github.com/microsoft/azure-pipelines-agent/pull/3578

- ✅https://github.com/microsoft/azure-pipelines-agent/issues/3632

Azure Pipelines Agent 2.200.0 should resolve the issues that are described in this post.

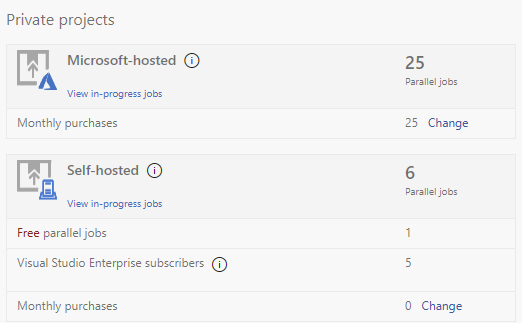

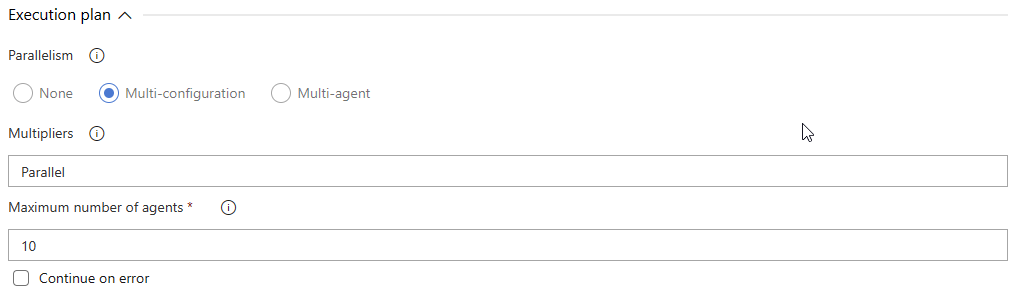

This pipeline takes a comma separated list of environments to build a package for and then does that on as many agents it can get its hand on. In the case of this client: 25 hosted agents.

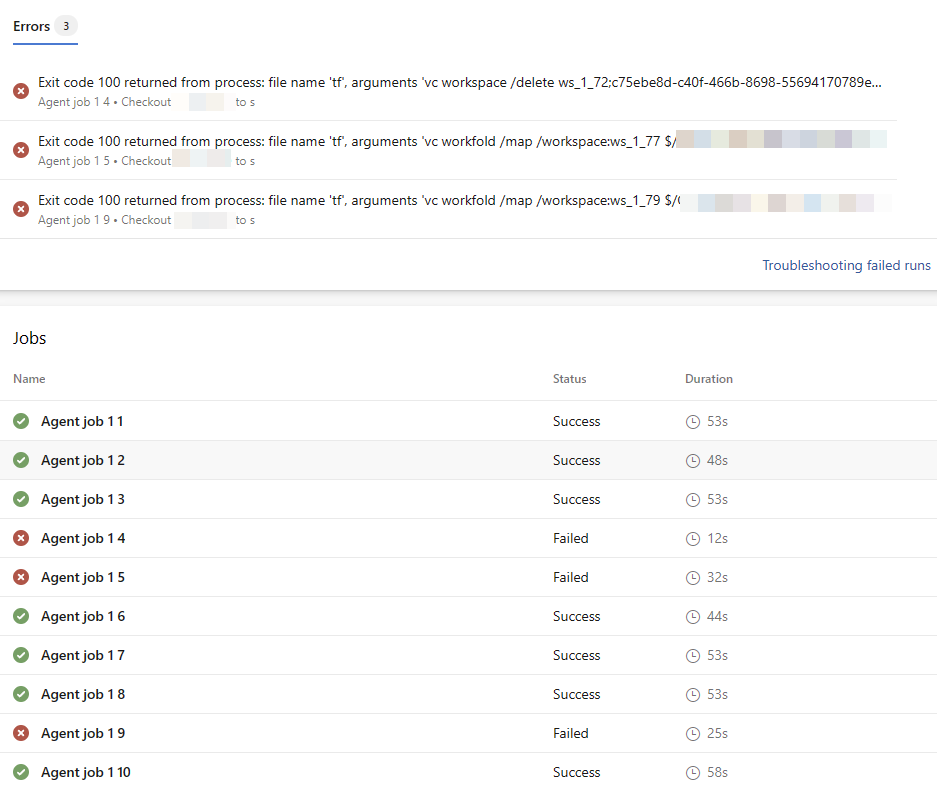

About 30% of the jobs failed. All failed jobs broke during checkout and multiple different errors were written to the log:

The working folder D:\a\1\s\ is already in use by the workspace ws_1_72;Project Collection Build Service on computer WIN-A02U1P0K0DU.And:

TF14061: The workspace ws_1_73;Project Collection Build Service does not exist. While inspecting the other jobs in the pipeline, we found that multiple jobs were executed, at the same time, by an agent with the same name. Up to 4 builds in parallel.

I submitted this case to the Azure DevOps Developer Community. You can track its progress here.

We initially thought this was a bug related to multi-configuration builds and indeed, when running normal build individually we didn't see any issues.

But when Monday arrived and teams started checking-in their changes, we quickly discovered that this issue happened also when many CI builds triggered on the same code change, but on otherwise unrelated build definitions. At this point we opened a support ticket with the Azure DevOps support team and I started poking around my contact list, slack and twitter to find out more.

From what I understand this issue is caused by a subset of hosted agents that share the same virtual machine. While standard agents run on a D2v2 Virtual Machine in Azure, other agents run on more beefy hardware using nested-virtualization. That way a single heavy VM can securely host multiple agents. And for some reason I do not fully understand yet, all of the agents on this VM share the machine name of the host machine.

Why is this a problem?

you may ask...

Let's start with explaining that for most people who're using Git, this won't ever be a problem. And for people using TFVC it may not occur when build aren't triggering around the same time, when you have a small workspace and when you use 1-4 hosted agents at max. The shorter your checkout task takes, the lower your chances of this causing a problem.

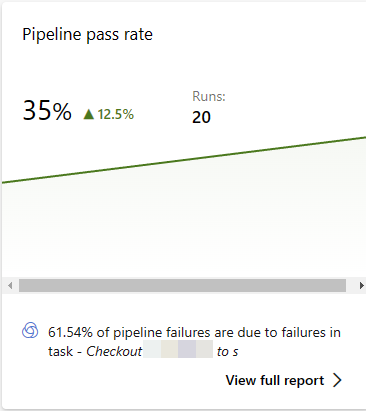

But if you do have large workspaces, many builds triggering at once and are using many agents in parallel, the chances of you hitting this but will increase massively. During really busy times we have seen op to 30% of our builds failing due to this problem, some cases failing almost 80% of the time when relying on multi-configuration.

What exactly causes it?

When a TFVC (Team Foundation Version Control) workspace is created, it is registered on the server (in our case Azure DevOps). The registration includes:

- Hostname

- Workspace name

- Username

- 1+ work folder mappings

At any given time no workspace can be created that maps the same folder on the same computer (hostname). So when 2 or more agents start at exactly the same time, one will be the first to successfully register and map the $Build.SoucesDirectory and the other ones will fail with:

The working folder D:\a\1\s\ is already in use by the workspace ws_1_72;Project Collection Build Service on computer WIN-A02U1P0K0DU.To prevent from bumping into this issue in case a previous build got interrupted, the agent starts every TFVC job with a check for existing workspaces and will try to remove these before creating a new workspace. When this happens while another build is still checking out sources it will be hit by:

TF14061: The workspace ws_1_73;Project Collection Build Service does not exist. Since the hosted agents always try to checkout their sources at the same location: D:\a\1\s\ and since nested virtual Azure Pipelines agents share the same name, they have a high likelihood oof running into this bug when they start at the same time and when the checkout process takes a while.

With this particular client the checkout can take a few minutes and their setup is likely to queue multiple CI builds when certain files change. And with 25 parallel pipelines the chances of hitting this bug are quite high.

Solving the issue

Ideally Microsoft solves the issue with a change in the way Azure Pipelines hosted agents work. I can see multiple ways out of this problem from Microsoft's side:

- Ensure all hosted agents have a unique hostname

- Ensure no 2 agents with the same name are ever active on the same Azure DevOps Organization by adjusting the Hosted Queue scheduling algorithm.

- Change the working directory for each agent on nested virtual agents sharing the same host. Currently rolling out.

- Let the agent query the agent pool and delay the checkout until previous jobs on agents with the same name have finished

- Detect these error messages and automatically retry the checkout task upon failure (instead of retrying the last command 3 times).

And there are multiple options for my client:

- Spin up a Scale-set Agent in Azure and host our own 25 agents

Pros: faster builds, incremental builds

Cons: more expensive, VM maintenance - Move to Git (is planned anyway)

Pros: easier to hire new talent, faster builds, modern version control system

Cons: takes time to implement, not everything can move at once, training required for people on the teams - Change the hostname of the agent at runtime.

Pros: Super fast, simple

Cons: Unknown impact on running processes, normally requires reboot of server (not possible). - Let the job query the agent pool and delay the checkout until previous jobs on agents with the same name have finished.

Pros: Can be implemented quickly, fully under our control

Cons: Needs to be added to every build that triggers on a TFVC repo (we can fix that), adds time to every build that runs (we'll take the wait over a failing build).

As you might imagine, we opted for the last solution.

The solution

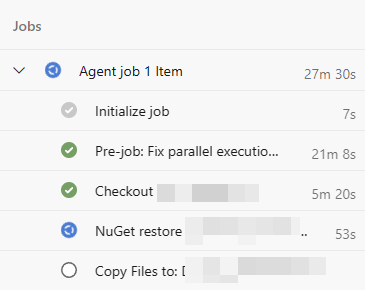

So, we ended up writing a custom task for Azure Pipelines to query the other jobs running on the same Agent Pool to see if any other jobs are running on an agent with the same name. If we detect such jobs we see which job has the lowest BuildId and JobId and yield priority to them. This requires all builds on the same agent pool to include this task. All builds must obey to the same rule, or we'll end up with collisions anyway.

To do this I ended up downloading the timeline for each running build, find all running jobs and see whether these jobs intend to perform a TFVC Checkout. If they are and use the same hostname we compare the BuildId and JobId and yield of the other job's Ids are lower than ours. Yielding simply means: waiting 15 seconds and trying again:

function must-yield

{

$runs = get-runs -top 50

foreach ($run in $runs)

{

$timeline = get-timeline -run $run

$jobs = get-jobs -timeline $timeline

foreach ($job in $jobs)

{

if (is-hostedjob $job)

{

if ((-not ($run.Id -eq $buildId -and $job.id -eq $jobId)) -and (has-checkout -job $job -timeline $timeline))

{

if ((get-hostname -timeline $timeline -job $job) -eq $currentHostname)

{

if (-not (hasfinished-checkout -timeline $timeline -job $job))

{

if ($run.Id -lt $buildId -or ($run.Id -eq $buildId -and $job.id -lt $jobId))

{

Write-VstsTaskWarning "Two agents with the same hostname detected. Waiting 15 seconds..."

return $true

}

}

}

}

}

}

}

return $false

}

while (must-yield)

{

Start-Sleep -seconds 15

}While the process is pretty ugly, for now it works and our pass-rate has gone back up to acceptable levels. I'm sure we'll run into a few more corner cases and will update the task a few more times in the near future. If you have any tips how we can get the following questions answered with less ugly code, I'm happy to accept tips or pull requests:

- Is a job running on a hosted agent?

- What's the hostname of the agent running each job?

- Is the job trying to checkout sources from TFVC?

- Has the job finished checking out sources from TFVC?

The next problem we needed to solve was to include the task in every build on the organization. Manually changing all 760 builds was of course an option, but there is a much easier way to accomplish the same thing in Azure Pipelines.

To ensure all builds obey the right-of-way rule we wrote a very simple Azure Pipelines Decorator extension that injects the task into every build:

"contributions": [

{

"id": "azure-pipelines-tfvc-fixparallel-decorator",

"type": "ms.azure-pipelines.pipeline-decorator",

"targets": [

"ms.azure-pipelines-agent-job.pre-job-tasks"

],

"properties": {

"template": "azure-pipelines-tfvc-fixparallel-decorator.yml"

}

}

],steps:

- task: tf-vc-fixparallel@2

displayName: 'Fix parallel execution on hosted agent.'Other issues

Apart from the issues described above, there are other "weird things" going on... I see the agent trying to delete workspaces that existed mere seconds before and failing:

tf vc workspaces ws_1_326 /computer:* /format:xml /collection:https://dev.azure.com/organization/ /loginType:OAuth /login:.,*** /noprompt

##[debug]<Workspaces>

##[debug] <Workspace computer="fv-az423-207" islocal="true" name="ws_1_326" ownerdisp="Project Collection Build Service (organization)" ownerid="ab21bc76-eb45-4c55-bc13-70ab5137d5ad:Build:03bfd66a-1ced-4a3f-b479-186dc2c894f0" ownertype="Microsoft.TeamFoundation.ServiceIdentity" owner="c75ebe8d-c40f-466b-8698-55694170789e" owneruniq="c75ebe8d-c40f-466b-8698-55694170789e">

##[debug] <Comment />

##[debug] <Folders>

##[debug] <WorkingFolder local="D:\a\1\s" item="$/Project" />

##[debug] </Folders>

##[debug] <LastAccessDate>2021-10-29T22:55:06.777+00:00</LastAccessDate>

##[debug] <OwnerAliases>

##[debug] <string>Build\03bfd66a-1ced-4a3f-b479-186dc2c894f0</string>

##[debug] <string>03bfd66a-1ced-4a3f-b479-186dc2c894f0</string>

##[debug] <string>Project Collection Build Service (organization)</string>

##[debug] </OwnerAliases>

##[debug] </Workspace>

##[debug]</Workspaces>

tf vc workspace /delete ws_1_326%3Bc75ebe8d-c40f-466b-8698-55694170789e /collection:https://dev.azure.com/organization/ /loginType:OAuth /login:.,*** /noprompt

TF14061: The workspace ws_1_326;Project Collection Build Service (organization) does not exist.The Agent will fail to delete the "missing" workspace, try 3 times and then fail the job. I've submitted a pull request to ignore the phantom delete failure, but in the mean time I've extended the wait-task to also try to delete the offending workspaces. This has reduced failure rates even further:

Write-Host "Cleaning up workspaces..."

$tf = [System.IO.Path]::Combine($agentHomeDirectory, "externals", "tf", "tf.exe")

$workspaces = [xml] (& $tf vc workspaces $desiredWorkspace /computer:* /format:xml /collection:$org /loginType:OAuth /login:.,$vssCredential /noprompt)

foreach ($workspace in $workspaces.SelectNodes("Workspaces/*"))

{

Write-Host "Deleting: $desiredWorkspace;$($workspace.owner) on $($workspace.computer)"

& $tf vc workspace /delete "$desiredWorkspace;$($workspace.owner)" /collection:$org /loginType:OAuth /login:.,$vssCredential /noprompt

}

$workspaces = [xml] (& $tf vc workspaces /format:xml /collection:$org /computer:$currentHostname /loginType:OAuth /login:.,$vssCredential /noprompt)

foreach ($workspace in $workspaces.SelectNodes("Workspaces/*"))

{

if ($workspace.name -like "ws_$(Split-Path $agentBuildDirectory -leaf)_*")

{

Write-Host "Deleting: $($workspace.name);$($workspace.owner) on $($workspace.computer)"

& $tf vc workspace /delete "$($workspace.name);$($workspace.owner)" /collection:$org /loginType:OAuth /login:.,$vssCredential /noprompt

}

Conclusion

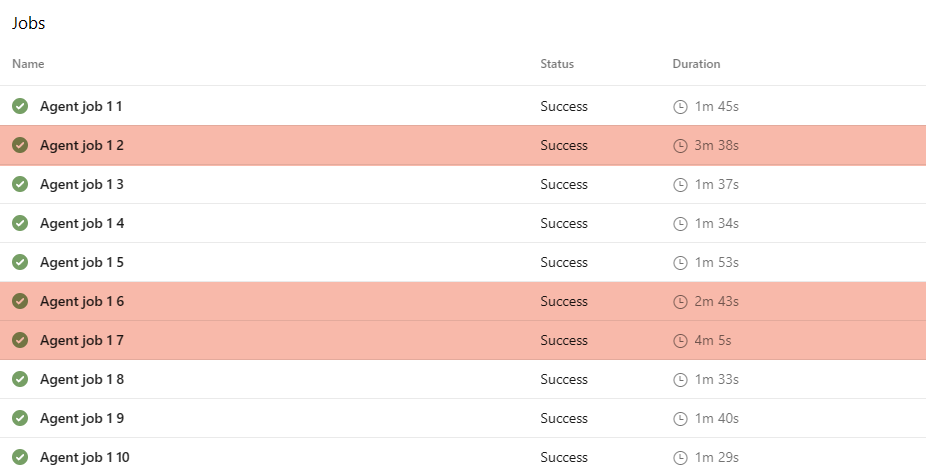

And now when we queue 25 builds all at once, some builds will queue nicely at the intersection and yield to the other traffic until it's safe to cross. Can you see which jobs were hit by this penalty?

And while it prevents the builds from breaking in 98% of the time, some are still heavily impacted by this bug:

If you're having this issue...

I'm hoping our investigation will soon result in a more permanent fix from the Azure Pipelines team, but until such time, if you need a fix for this problem, install the latest version of the TFVC Build Tasks and contact me to share the decorator with your organization.